Delivering Business Services through modern practices and technologies. -- Cloud, DevOps and As-a-Service.

Tuesday, April 26, 2011

Private Cloud Provisioning Templates

Sunday, April 24, 2011

Private Cloud Provisioning & Configuration

Tuesday, April 05, 2011

Are Enterprise Architects Intimidated by the Cloud?

Monday, April 04, 2011

Cloud.com offers Amazon API

"CloudBridge provides a compatibility layer for CloudStack cloud computing software that tools designed for Amazon Web Services with CloudStack.

The CloudBridge is a server process that runs as an adjunct to the CloudStack. The CloudBridge provides an Amazon EC2 compatible API via both SOAP and REST web services."

Saturday, April 02, 2011

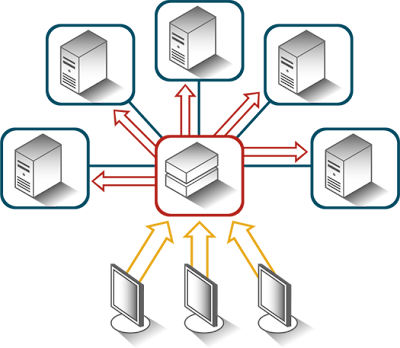

The commoditization of scalability

Wednesday, March 16, 2011

Providing Cloud Service Tiers

In the early days of cloud computing, emphasis was placed on 'one size fits all'. However, as our delivery capabilities have increased, we're now able to deliver more product variations, where some products provide the same function (e.g., storage) but deliver better performance, availability, recovery, etc. and are priced higher. I.T. must assume that some applications are business critical while others are not. Forcing users to pay for the same class of service across the spectrum is not a viable option. We've spent a good deal of time analyzing various cloud configurations, and can now deliver tiered classes of services in our private clouds.

Reviewing trials, tribulations and successes in implementing cloud solutions, one can separate tiers of cloud services into two categories: 1) higher throughput elastic networking; or 2) higher throughput storage. We leave the third (more CPU) out of this discussion because it generally boils down to 'more machines,' whereas storage and networking span all machines.

Higher network throughput raises complex issues regarding how one structures networks: VLAN or L2 isolation, shared segments and others. Those complexities, and related costs, increase dramatically when adding multi-speed NICs and switches, for instance 10GBASE-T, NIC teaming and other such facilities. We will delve into all of that in a future post.

Tiered Storage on Private Cloud

Where tiered storage classes are at issue, cost and complexity are not such a dramatic barrier, unless we include a mix of network and storage (i.e., iSCSI storage tiers). For the sake of simplicity in discussion, let's ignore that and break the areas of tiered interest into: 1) elastic block storage (“EBS”); 2) cached virtual machine images; 3) running virtual machine (“VM”) images. In the MomentumSI private cloud, we've implemented multiple tiers of storage services by adding solid state drives (SSDs) to each of these areas, but doing so requires matching the nature of the storage usage with the location of the physical drives.

Consider implementing EBS on higher speed SSDs. EBS volumes are exposed over the network so that they remain attachable to various VMs. Unless a very high speed network carries the drive signaling and data, the network becomes the bottleneck and users will not see the dramatic speed improvements normally associated with SSD. Whether one uses ATA over Ethernet (AoE), iSCSI, NFS, or other models to project storage across the VM fabric, even standard SATA II drives under load could overload a one-gigabit Ethernet segment. However, by exposing EBS volumes on their own 10GbE network segments, EBS traffic stands a much better chance of not overloading the network. For instance, at MSI we create a second tier of EBS service by mounting SSDs on the mount points under which volumes will exist (e.g., /var/lib/eucalyptus/volumes, by default, on a Eucalyptus storage controller). Doing so gives users of EBS volumes the option of paying more for 'faster drives.'
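To put rough numbers on the network bottleneck, here is a back-of-the-envelope sketch. The per-drive throughput figures are assumed round numbers for illustration, not measurements from our lab, and the link capacities are raw line rates that ignore protocol overhead.

```python
# Back-of-the-envelope link budget for network-attached block storage.
# All drive throughput figures are rough assumptions for illustration.

GBIT = 1_000_000_000  # bits per second in one gigabit


def link_capacity_mb_s(gigabits: float) -> float:
    """Raw line rate of an Ethernet link in megabytes per second."""
    return gigabits * GBIT / 8 / 1_000_000


def drives_to_saturate(link_gigabits: float, drive_mb_s: float) -> float:
    """How many drives streaming at drive_mb_s would fill the link."""
    return link_capacity_mb_s(link_gigabits) / drive_mb_s


# Assumed sustained sequential throughput (hypothetical round numbers).
SATA_HDD_MB_S = 100.0
SATA_SSD_MB_S = 250.0

for name, gbit in (("1GbE", 1), ("10GbE", 10)):
    cap = link_capacity_mb_s(gbit)
    print(f"{name}: {cap:.0f} MB/s raw, "
          f"~{drives_to_saturate(gbit, SATA_HDD_MB_S):.1f} HDDs or "
          f"~{drives_to_saturate(gbit, SATA_SSD_MB_S):.1f} SSDs to saturate")
```

Under these assumptions, a single SSD streaming at 250 MB/s already asks for twice what a 1GbE segment can carry (125 MB/s raw), which is why the faster drives only pay off on a faster segment.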

While EBS gives users of cloud storage a higher tier of user storage, the cloud's own operations also represent a point of optimization, and thus of tiered service. The goal is to create images and spin them up faster. Two particular operations generate significant disk activity in a cloud implementation: caching VM images on hypervisor mount points, and copying those cached images into running VMs. Consider Eucalyptus, which stores copies of kernels, ramdisks (initrd), and Eucalyptus Machine Image (“EMI”) files on a (usually) local drive at the Node Controllers (“NC”). One could also store EMIs on iSCSI, AoE or NFS storage, but the same discussion as that regarding EBS applies (pair fast drives with fast networking). The key to the EMI cache is not so much fast writes as rapid reads. For each running instance of an EMI (i.e., a VM), the NC creates a copy of the cached EMI, and uses that copy for spinning up the VM. Therefore, what we desire is very fast reads from the EMI cache, with very fast writes to the running EMI store. Clearly that does not happen if the same drive spindle and head carry both operations.

In our labs, we use two drives to support the higher speed cloud tier operations: one for the cache and one for the running VM store. However, to get a Eucalyptus NC, for instance, to use those drives optimally, we must direct the reads and writes to different disks: one drive (disk1) dedicated to the cache, and one drive (disk2) dedicated to writing/running VM images. Continuing with Eucalyptus as the example setup (though other cloud controllers show similar traits), the NC will, by default, store the EMI cache and VM images on the same drive -- precisely what we don't want for higher tiers of services.
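A quick way to sanity-check that the cache and the running VM store really sit on separate mounts is to compare the device IDs the operating system reports for the two directories. The sketch below is a hypothetical helper, not part of Eucalyptus; the function names are ours. Note that `st_dev` identifies a mounted filesystem, so two partitions on the same physical disk would still pass this check.

```python
import os


def on_same_device(path_a: str, path_b: str) -> bool:
    """True if both paths live on the same mounted filesystem/device."""
    return os.stat(path_a).st_dev == os.stat(path_b).st_dev


def check_split(cache_dir: str, run_dir: str) -> str:
    """Report whether cache reads and run-space writes share a device."""
    if on_same_device(cache_dir, run_dir):
        return f"WARNING: {cache_dir} and {run_dir} share a device"
    return f"OK: {cache_dir} and {run_dir} are on separate devices"


if __name__ == "__main__":
    import sys
    # Usage: python check_split.py <cache_dir> <run_dir>
    if len(sys.argv) == 3:
        print(check_split(sys.argv[1], sys.argv[2]))
```

Run it on a node controller with the cache directory and the run-space directory as the two arguments; a warning means reads and writes are still landing on the same mount.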

By default, Eucalyptus NCs store running VMs on the mount point /usr/local/eucalyptus/???, where ??? represents a cloud user name. The NC also stores cached EMI files on /usr/local/eucalyptus/eucalyptus/cache -- clearly within the same directory tree. Therefore, unless one mounts another drive (partition, AoE or iSCSI drive, etc.) on /usr/local/eucalyptus/eucalyptus/cache, the NC will create all running images by copying from the EMI cache to the run-space area (/usr/local/eucalyptus/???) on the same drive. That causes significant delays in creating and spinning up VMs. The simple solution: mount one SSD on /usr/local/eucalyptus, and then mount a second SSD on /usr/local/eucalyptus/eucalyptus/cache. A cluster of Eucalyptus NCs could share the entire SSD 'cache' drive by exposing it as an NFS mount that all NCs mount at /usr/local/eucalyptus/eucalyptus/cache. Sharing the cache does introduce a race, however: the cloud may write an EMI to the cache, due to a request to start a new VM on one node controller, yet another NC might attempt to read that EMI before the cached write completes, due to a second request to spin up that EMI (not an uncommon scenario). There exist a number of ways to solve that problem.
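One of those ways, sketched here purely for illustration, is to stage the image under a temporary name and rename it into place only once the copy is complete, so a reader sees either no file or a whole file. This is not how Eucalyptus itself manages its cache; `publish_to_cache` and `cached_image` are hypothetical names. The rename is atomic on a local POSIX filesystem, and we are assuming the NFS server behaves acceptably for this purpose, which should be verified for any real deployment.

```python
import os
import shutil
import tempfile


def publish_to_cache(source_path: str, cache_dir: str, name: str) -> str:
    """Copy an image into cache_dir so readers never see a partial file."""
    final_path = os.path.join(cache_dir, name)
    # Stage the copy under a temporary name in the same directory, so the
    # final rename stays within one filesystem.
    fd, tmp_path = tempfile.mkstemp(
        dir=cache_dir, prefix=name + ".", suffix=".partial")
    try:
        with os.fdopen(fd, "wb") as dst, open(source_path, "rb") as src:
            shutil.copyfileobj(src, dst)
            dst.flush()
            os.fsync(dst.fileno())  # push the data to disk before renaming
        os.replace(tmp_path, final_path)  # atomic rename on POSIX
    except BaseException:
        # Clean up the staging file if the copy or rename failed.
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return final_path


def cached_image(cache_dir: str, name: str):
    """Return the path of a fully published image, or None if absent."""
    path = os.path.join(cache_dir, name)
    return path if os.path.isfile(path) else None
```

This only protects readers from half-written images. It does not stop two node controllers from fetching the same image at the same time; that would need a lock or a coordinator on top.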

The gist here: by placing SSD drives at strategic points in a cloud, we can create two forms of higher tiered storage services: 1) higher speed EBS volumes; and 2) faster spin-up time. Both create valid billing points, and both can exist together, or separately in different hypervisor clusters. This capability is now available via our Eucalyptus Consulting Services and will soon be available for vCloud Director.

Next up – VLAN, L2, and others for tiered network services.

Monday, March 14, 2011

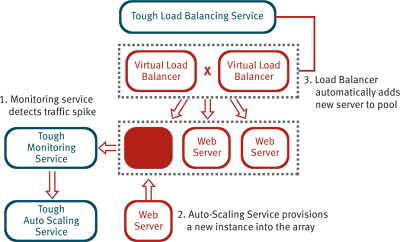

Auto Scaling as a Service

Wednesday, March 09, 2011

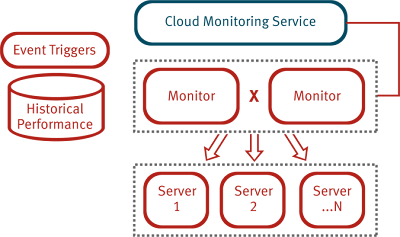

Non-Invasive Cloud Monitoring as a Service

The Tough Cloud Monitoring solution is our next generation offering targeting virtualized workloads, as well as PaaS services, housed in either traditional data centers or private cloud environments.

Tuesday, March 08, 2011

Tough Load Balancing as a Service

Last week, MomentumSI announced the availability of our Tough Load Balancing Service along with a Cloud Monitoring and Auto Scaling solution.

Wednesday, March 02, 2011

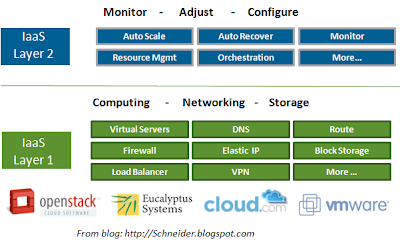

Separating IaaS into Two Layers

Some people call the first layer "Hardware-as-a-Service". It primarily focuses on the 'virtualization' of hardware, enabling better manipulation by the upper layers. This was the core proposition of the original EC2. There are some great vendors in this space like Eucalyptus, Cloud.com and VMware. Cool projects are also emerging out of OpenStack, which many of the aforementioned companies hope to adopt and extend.

Friday, February 25, 2011

Amazon CloudFormation Exceeds Expectations

Tuesday, November 23, 2010

Defending the Private Cloud

"Back in January, I made a controversial prediction that private clouds will be discredited by year end. Now, in the eleventh month of the year, the cavalry has arrived to support my prediction, in the form of a white paper published by a most unlikely ally, Microsoft."

Wednesday, August 25, 2010

MomentumSI Partners on Private / Hybrid Cloud

- Improved agility — Deployment cycles shrink from months to minutes, making IT far more responsive to business lines and other internal customers.

- Reduced capital expense — Utilization of hardware capacity improves dramatically due to elastic provisioning and de-provisioning of services.

- Reduced operating costs — Software infrastructure and provisioning processes are standardized and automated. Control is decentralized to decentralized constituents.

- Reduced risk — The controlled cloud provides an alternative to rogue deployments to the public cloud. The ability to move workloads between deployment environments (physical, virtual or cloud) avoids platform lock-in.

- newScale service catalog — www.newScale.com

- rPath release automation — www.rPath.com

- Eucalyptus cloud automation — www.Eucalyptus.com

Tuesday, June 08, 2010

Current challenges for Application Performance Engineering

Application performance engineering is a discipline encompassing expertise, tools, and methodologies to ensure that applications meet their non-functional performance requirements. Performance engineering has understandably become more complex with the rise in multi-tier, distributed application architectures that include SOA, BPM, SaaS, PaaS, cloud and others. Although performance engineering ideally should be applied across the lifecycle, we’re seeing more factors that unfortunately push it into the production phase, typically to resolve problems that have already gotten out of hand. That is clearly a tougher challenge, so how did we get to this point?

In the client-server past, performance optimization was something that folks in the IT department typically figured out through trial and error. Developers learned to write more efficient database queries, database administrators learned to index and cache, and system administrators monitored CPU and memory to upgrade when needed.

As application architectures started to get more complex, the dependencies increased and it was harder for one team to track down problems without chasing its tail. More organizations adopted something that was previously only used by enterprises with highly scalable, reliable mission critical applications – the performance testing lab. Vendors like Mercury created popular load testing tools like LoadRunner, and organizations invested millions in lab hardware and software in an attempt to recreate production environments that they could control for testing purposes.

Unfortunately, these performance labs became very difficult to cost justify. First, it always seemed to take too much time and money to set up the realistic test environments you’d like, particularly as apps became more distributed. Next, projects were often already behind schedule when it came time to test, and so lab times often had to be cut short. Factors like these minimized the lab’s value, but the real killer was the high maintenance costs for all that hardware and software, along with the data center and staff.

This put many IT organizations in a tough spot. With limited means to perform system-wide performance testing, and the inclusion of more SaaS/PaaS/cloud services in their architecture, they had to make do with whatever subsystem level performance testing they could get. After that, it’s finger-crossing and resigning yourself to further optimization in production.

Unfortunately, production can be a very frustrating place to try to optimize performance, particularly when you have performance problems and growing complaints from customers, partners, etc. It’s in these pressured environments where you need true performance engineers who follow a methodical and systematic end-to-end approach. Performance bottlenecks can reside in a myriad of places in highly distributed architectures, and you need to follow a disciplined methodology to analyze dependencies, isolate problem areas, and then leverage the best of breed tools to trace, profile, optimize, etc. each of the tiers and technologies in the application delivery path. This takes a lot of skill and expertise.

In short, the challenges faced by today’s application performance engineer in production settings are a far cry from the client-server days of in-house tuning and experimentation. We expect that the role of Performance Engineer will grow in importance as SOA, BPM, cloud, and SaaS/PaaS implementations increase, at least until more viable pre-production system performance testing options become available.

Friday, October 23, 2009

SOA Manifesto

Sunday, June 21, 2009

The Case for Expropriated Reuse

Expropriated Reuse is a form of reuse that focuses on the here and now. The goal isn’t to define some new service and hope for ‘accidental reuse’ or even to put forward a case for ‘planned reuse’. Instead, it’s the act of going out and finding redundant code that already exists across multiple systems and turning it into a single shared service. I’ll repeat that: Find redundant code and refactor out the common elements into shared services. This ALWAYS results in multiple consumers (or mandated consumers, if you prefer).

I’ll give a quick example. Over time, company X had ‘accidentally’ created 5 software modules that existed inside of larger applications that all did some form of ‘quoting a price to customers’. This led to 5 teams maintaining it, 5 sets of computer hardware, etc. Expropriated Reuse is the act of going to the applications and cutting out the common elements and turning them into one shared service. Note that this is different than application rationalization or application consolidation that tends to use a nuclear bomb to deal with the problem. We’re recommending sniper firing with a bit of additional precision bombing.
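To make the hunt for redundant code a little more concrete, here is a toy sketch that fingerprints function bodies across several modules and reports exact structural matches. It is an illustration only: the module names and sources in the usage note are made up, and real overlap between systems (five different takes on 'quote a price') is usually functional rather than textual, so finding it takes analysis well beyond this.

```python
import ast
import hashlib
from collections import defaultdict


def function_fingerprints(source: str) -> dict:
    """Map each function name in source to a hash of its body's AST."""
    prints = {}
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.FunctionDef):
            # Hash only the body, so differently named functions can match.
            body = "".join(ast.dump(stmt) for stmt in node.body)
            prints[node.name] = hashlib.sha256(body.encode()).hexdigest()
    return prints


def find_duplicates(modules: dict) -> list:
    """Return groups of (module, function) pairs whose bodies match."""
    groups = defaultdict(list)
    for module_name, source in modules.items():
        for func_name, digest in function_fingerprints(source).items():
            groups[digest].append((module_name, func_name))
    return [sorted(g) for g in groups.values() if len(g) > 1]
```

Calling `find_duplicates` with a dict of module name to source text returns the groups of functions that share a body; each group is a candidate for being cut out into one shared service.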

The reasons that we do this are mostly financial. We want to reduce the amount of code that has to be maintained and operated. We want to reduce our costs. There are plenty of other reasons like increased quality, time-to-market, etc. but I’m done with those softies. The case is cold hard cash. Show me how to save money or go away.

IMHO, the new SOA agenda is about expropriated reuse. The SOA Program must actively identify opportunities to make the enterprise software portfolio more efficient and less costly. Just like in city planning exercises, we must acknowledge the needs of the community over the needs of the few. And I’m in agreement with Clinton that ‘we should never waste a good crisis’. Reduced I.T. budgets have created a ‘crisis of efficiency’ in virtually all of our clients. The imperative is to find ways to reduce budgets in the short term and over the next 3-5 years.

To quote Todd Biske, “… a common analogy for enterprise architecture these days is that of city planning. … (does) your architecture have more parallels to the hot political potato of eminent domain? Are you having to bulldoze applications that were only built a few years ago whose residents are currently happy for the greater good? What about old town? Any plans for renovation? If you aren’t asking these questions, then are you really embracing enterprise SOA?”

I’ve been wondering: Should all SOA programs that do not have the authority to issue an order of ‘eminent domain’ on the software portfolio be shut down? Does your SOA program have a hunting license to go find inefficiencies / duplicate coding and to issue an order of eminent domain on that code? Can you imagine what our country would look like if we couldn’t issue an order of eminent domain to capture land for our highways, bridges or railroads? Can you imagine if we didn’t have the ability to implement ‘easement by necessity’? Consider your town/neighborhood and think about the following: Railroad easements, storm drain or storm water easements, sanitary sewer easements, electrical power line easements, telephone line easements, fuel gas pipe easements, etc. What a mess it would be. The crisis of budgets must draw out the leaders. If you haven’t already been issued a hunting license, it’s time to go get one.

So I’ll answer my own question. If your SOA program is responsible for watching the blinking lights on your newly acquired SOA Management tool, or making sure that people enter their ‘accidental’ services into your flashy registry, I’ll recommend that they shut you down. This is a waste of time. The SOA group must be given the imperative and authority (a hunting license) to find waste in the enterprise and to destroy it. SOA isn’t about policing people on WS-Standards or similar crap – it’s about saving your company millions.

The Case for Planned Reuse

In my last post, I argued that the concept of ‘accidental services’ or ‘build it and they will come’ is a bad idea – because … they typically don’t come. Services that are created with a very specific consumer in mind are typically limited in capability, scope and result in limited reuse.

The MomentumSI Harmony method suggests that service analysis be performed on the first consumer’s needs as well as potential consumers that aren’t in the immediate scope. This is easier said than done. How do you identify the requirements of a service if you have ‘phantom consumers’?? The short answer is that there are techniques that involve looking at UI models, process models, data models and other artifacts that will give you insight into the domain. The result is a list of potential consumers and a plan for their eventual consumption. The point is that there are techniques to help organizations define services according to a plan – and doing so leads to increased reuse and a better software portfolio.

Again, Planned Reuse is most effective when you’re working in a new domain and you don’t already have a bunch of conflicting/overlapping software that exists. The immediate project might call for an ‘Order Service’, but you know that the service will eventually be called by the Web eCommerce system, the call center software, the B2B gateway, etc. Those projects aren’t in scope – but you consider their needs when designing the service.

This is all fine, but what happens when you’re analyzing a service for an immediate project that clearly should be called by existing projects/software? This is the case for Expropriated Reuse.

The Case Against Accidental Reuse

Saturday, December 20, 2008

New Blog on Cloud Computing

http://cloudv.blogspot.com

Friday, November 07, 2008

Nomination for Federal CTO

"Obama will appoint the nation's first Chief Technology Officer (CTO) to ensure that our government and all its agencies have the right infrastructure, policies and services for the 21st century. The CTO will ensure the safety of our networks and will lead an interagency effort, working with chief technology and chief information officers of each of the federal agencies, to ensure that they use best-in-class technologies and share best practices."

I nominate Tim O'Reilly as the first Federal CTO. Do I hear a second??